The conversation around Claude Code's open-source ecosystem keeps getting framed as a fight over the shell.

I think that is the least interesting part of the story.

If this were only about cloning a terminal interface, I would not care very much. Interfaces age quickly. They get copied, polished, replaced, and forgotten. The thing that lasts longer is the layer around them: the memory system, the routing logic, the tool surface, the approval rules, and the habits an operator builds on top.

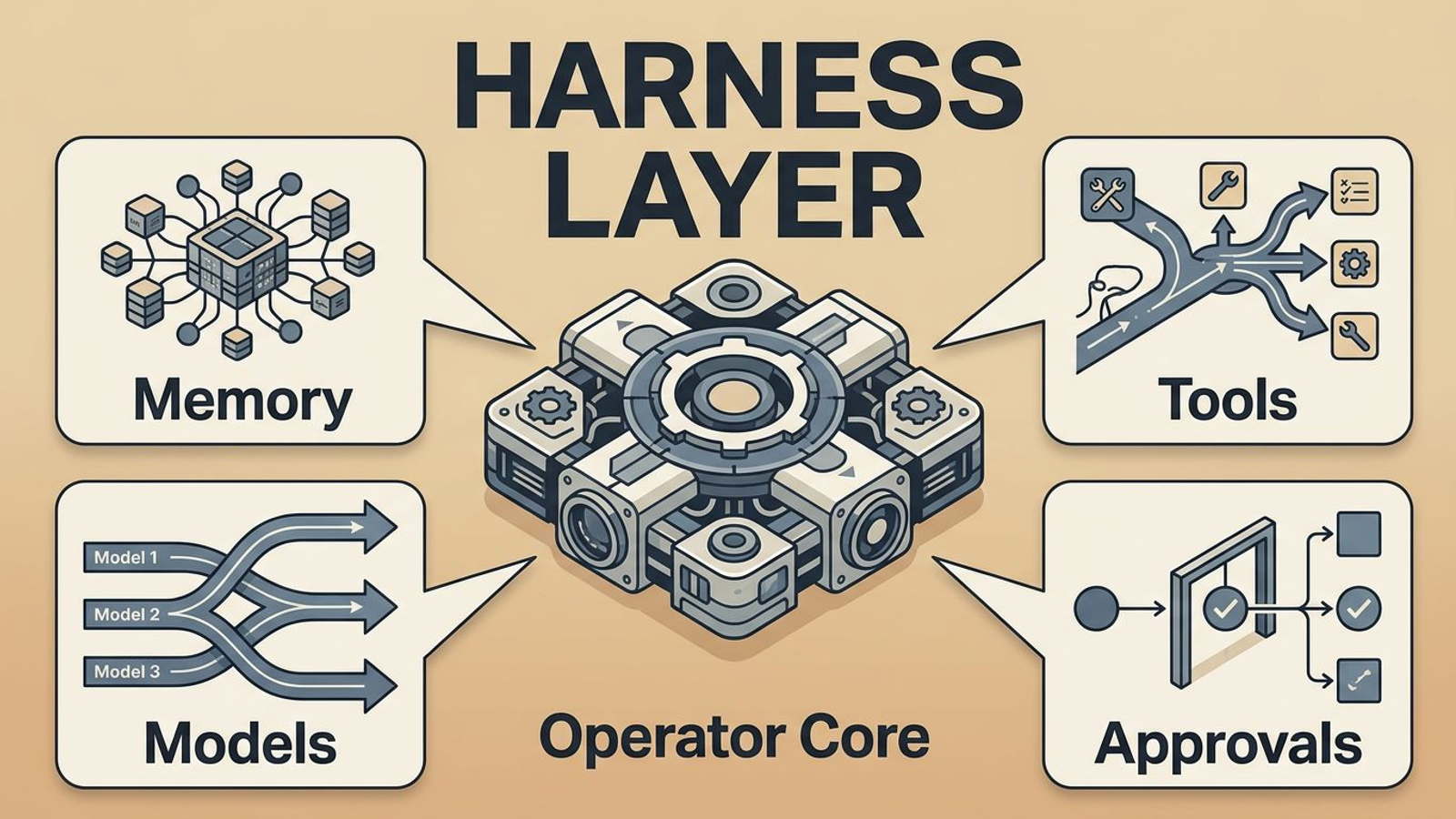

That is why I keep coming back to the same conclusion: Claude Code's open-source ecosystem matters because it is turning into a harness layer.

By harness layer, I mean the working layer between the operator and the raw models. It is where memory gets recalled, where tools get routed, where models can be swapped, and where the workflow starts to feel like a durable system instead of a series of one-off prompts.

That is the layer I would bet on.

Claude Code's open-source ecosystem is not interesting because it is open

It is interesting because it gives builders a programmable operator surface.

One of the clearest summaries of that shift came from a tweet arguing that workflows themselves are disposable and that the durable layer is the harness around models, tools, and knowledge.

I think that framing is more useful than most of the "who copied what" commentary around these tools.

If the shell is the headline, you end up debating cosmetics. If the harness is the point, you start asking better questions:

- what survives a model swap,

- what preserves operator intent,

- what gets remembered,

- and what compounds after the first run.

That is where the real leverage is.

The shell is where the demo starts. The harness is where the business starts.

Memory stops being a feature and becomes infrastructure

The second strong signal is memory.

What I like about the memory-catcher example is that it treats memory as a system concern, not a novelty checkbox. The point is not "look, the AI remembers me." The point is that recall can be shaped by what actually gets used, what gets revisited, and what keeps proving valuable over time.

That is a very different level of ambition.

Once memory becomes infrastructure, the product question changes. I stop asking whether a tool "supports memory" in the abstract. I start asking:

- what gets stored,

- what gets surfaced,

- what gets ignored,

- and how the memory layer improves after repeated use.
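To make those four questions concrete, here is a minimal sketch of a memory layer where recall is ranked by real usage. Everything in it is an illustrative assumption: `MemoryStore`, `MemoryItem`, and the method names are hypothetical and do not come from Claude Code or any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    uses: int = 0          # how often the operator actually used this item
    ignored: bool = False  # explicitly excluded from recall


@dataclass
class MemoryStore:
    """Hypothetical store: what gets stored, surfaced, ignored, and reinforced."""
    items: dict[str, MemoryItem] = field(default_factory=dict)

    def store(self, key: str, text: str) -> None:
        self.items[key] = MemoryItem(text)

    def mark_used(self, key: str) -> None:
        # Repeated use is the signal that improves recall over time.
        self.items[key].uses += 1

    def ignore(self, key: str) -> None:
        self.items[key].ignored = True

    def surface(self, limit: int = 3) -> list[str]:
        # Rank by real usage; ignored items never surface.
        candidates = [(k, m) for k, m in self.items.items() if not m.ignored]
        candidates.sort(key=lambda pair: pair[1].uses, reverse=True)
        return [k for k, _ in candidates[:limit]]


store = MemoryStore()
store.store("deploy-notes", "How we ship on Fridays")
store.store("old-idea", "Abandoned plugin concept")
store.store("style-guide", "Tone rules for posts")
store.mark_used("style-guide")
store.mark_used("style-guide")
store.mark_used("deploy-notes")
store.ignore("old-idea")
print(store.surface())  # ['style-guide', 'deploy-notes']
```

The useful property is that every answer is inspectable: the usage counts and the ignore flags are plain data an operator can read and correct.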

That is the same reason I care about source tracking in my content workflow. If I save ten useful tweets and articles, I do not want to keep rediscovering or reusing them blindly. I want the system to know what I have already caught up on, what I drafted from, and what should stay out of the next piece unless I explicitly revive it.

That is not product polish. That is operational infrastructure.
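A source-tracking rule like that can be sketched in a few lines. This is only an illustration under stated assumptions: the `SourceLedger` class, the three states, and the source IDs are hypothetical names invented for the example, not part of any existing tool.

```python
from dataclasses import dataclass, field
from enum import Enum


class SourceState(Enum):
    SAVED = "saved"                # captured, not yet read
    READ = "read"                  # caught up on
    DRAFTED_FROM = "drafted_from"  # already used in a piece


@dataclass
class SourceLedger:
    """Hypothetical ledger of what has been saved, read, and drafted from."""
    states: dict[str, SourceState] = field(default_factory=dict)

    def save(self, source_id: str) -> None:
        # Saving again never resets a source that is further along.
        self.states.setdefault(source_id, SourceState.SAVED)

    def mark(self, source_id: str, state: SourceState) -> None:
        self.states[source_id] = state

    def eligible(self, revive: frozenset[str] = frozenset()) -> list[str]:
        # Sources already drafted from stay out unless explicitly revived.
        return [
            sid for sid, state in self.states.items()
            if state is not SourceState.DRAFTED_FROM or sid in revive
        ]


ledger = SourceLedger()
for sid in ("tweet-a", "article-b", "tweet-c"):
    ledger.save(sid)
ledger.mark("article-b", SourceState.READ)
ledger.mark("tweet-c", SourceState.DRAFTED_FROM)

print(ledger.eligible())                             # ['tweet-a', 'article-b']
print(ledger.eligible(revive=frozenset({"tweet-c"})))  # all three sources
```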

Orchestration inside Claude Code is the bigger tell

The council-style experiments are the next clue.

The tweet about them is interesting to me because it is really about workflow structure. A council, a chairman, and an operator choosing the path of a decision are all signs that the surface is shifting from "ask one model one thing" toward "run a process through a controllable system."

That is what a harness layer looks like when it starts growing up.

The value is no longer just model output quality. The value becomes:

- how work is decomposed,

- how roles are assigned,

- how clarifying questions get injected,

- and how much control the operator keeps while all of that happens.

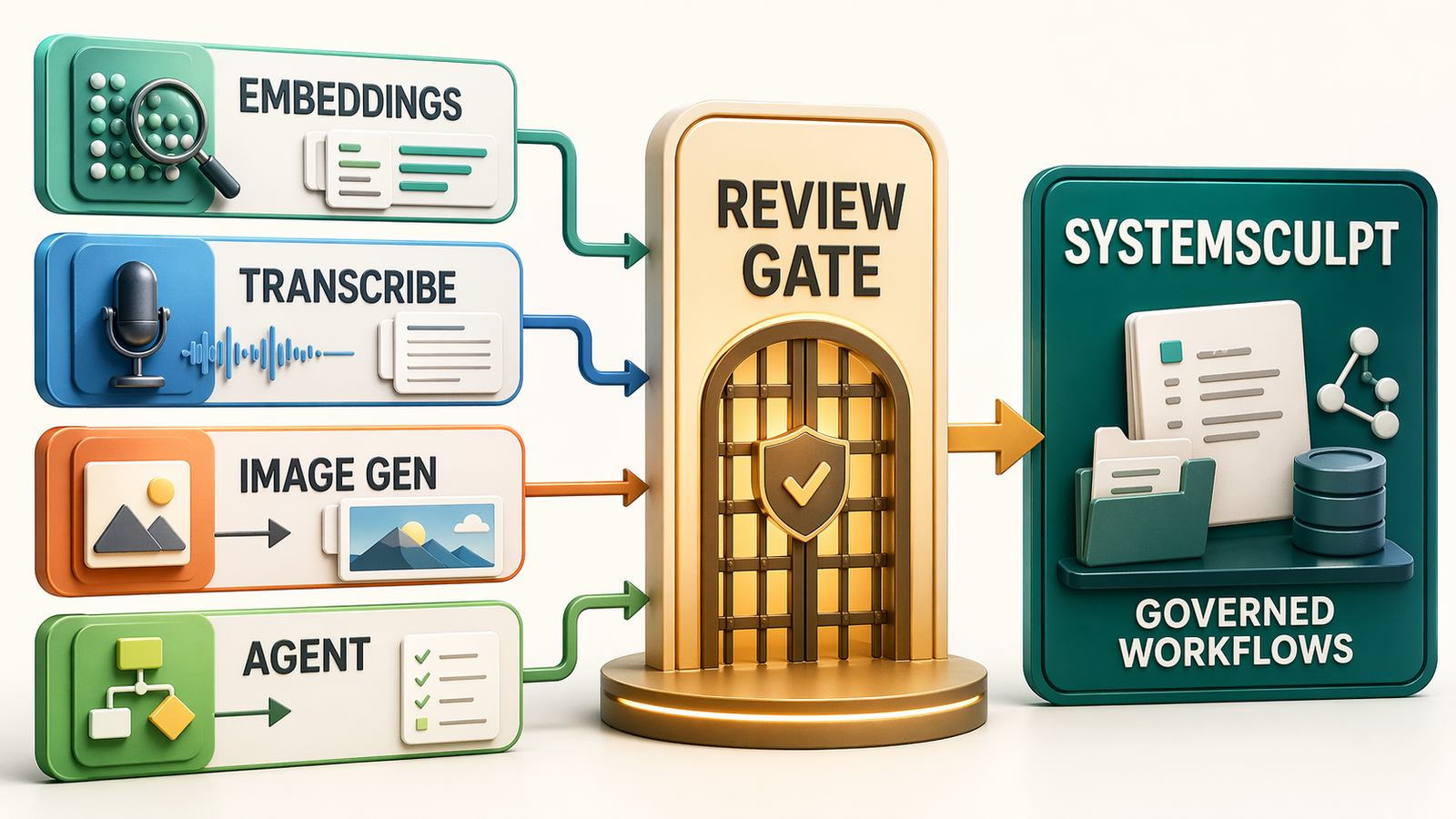

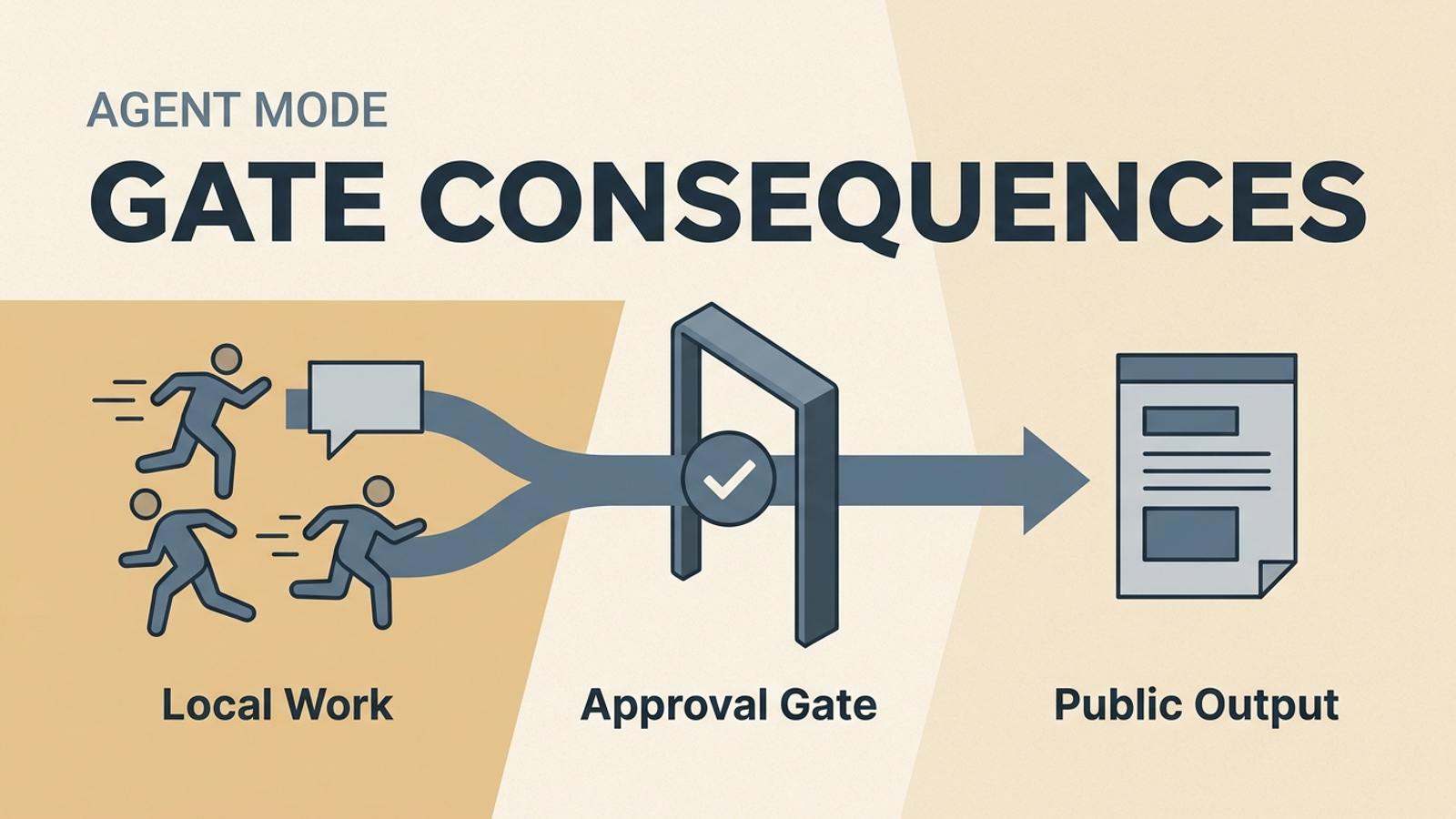

This is also why I keep pushing approval-first automation. A stronger harness without stronger checkpoints is just a faster way to make confident mistakes. If you want a practical way to structure those checkpoints, I would start with the Project Spec Workflow and the Weekly AI Risk Review, because both force the workflow to become legible before it becomes automatic.
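As a rough illustration of approval-first automation, the sketch below routes high-impact actions through a checkpoint and logs every outcome. The `Action` type, the `execute` function, and the `approve` callback are hypothetical names chosen for this example; a real harness would replace the callback with an actual human review step.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    name: str
    high_impact: bool
    run: Callable[[], str]


def execute(
    action: Action,
    approve: Callable[[Action], bool],
    log: list[str],
) -> Optional[str]:
    """Run an action, but only after approval when it is high impact."""
    if action.high_impact and not approve(action):
        log.append(f"blocked: {action.name}")
        return None
    result = action.run()
    log.append(f"ran: {action.name}")
    return result


def deny_all(action: Action) -> bool:
    # Stand-in for a human reviewer who rejects everything.
    return False


audit_log: list[str] = []
draft = Action("draft-summary", high_impact=False, run=lambda: "summary text")
publish = Action("publish-post", high_impact=True, run=lambda: "published")

print(execute(draft, deny_all, audit_log))    # summary text
print(execute(publish, deny_all, audit_log))  # None
print(audit_log)  # ['ran: draft-summary', 'blocked: publish-post']
```

The log matters as much as the gate: it is what lets the operator inspect what happened after the fact.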

Model switching changes the economics of the surface

The last signal in this cluster is model portability.

Once people can move different models into the same Claude Code surface with less friction, the center of gravity changes again. The interface matters less. The harness matters more.

That does not mean model quality becomes irrelevant. It means the stack gets reordered.

Operator flow matters alongside model quality. Once memory, tool routing, and approvals are stable, those layers can start to matter more than the exact model sitting underneath on a given day.

That is strategically important because a good harness can outlive a model cycle.

If I can preserve the same working habits, the same approval logic, the same retrieval layer, and the same tool surface while swapping models underneath, I have a much more durable system than I had when everything depended on one provider path staying dominant.
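One way to picture that separation is a harness that owns the context and the workflow while the model sits behind a small interface. This is a sketch under assumptions, not how Claude Code is implemented: the `Model` protocol, `Harness`, and the two toy models are hypothetical stand-ins for real provider clients.

```python
from typing import Protocol


class Model(Protocol):
    def complete(self, prompt: str) -> str: ...


class EchoModel:
    # Toy stand-in for one provider.
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    # Toy stand-in for a different provider.
    def complete(self, prompt: str) -> str:
        return prompt.upper()


class Harness:
    """Keeps context and workflow stable while the model underneath changes."""

    def __init__(self, model: Model, context: list[str]) -> None:
        self.model = model
        self.context = context  # retrieval layer and operating rules live here

    def swap_model(self, model: Model) -> None:
        self.model = model

    def run(self, task: str) -> str:
        prompt = "\n".join([*self.context, task])
        return self.model.complete(prompt)


harness = Harness(EchoModel(), context=["rule: cite sources"])
first = harness.run("summarize")
harness.swap_model(UpperModel())
second = harness.run("summarize")

print(first)   # echo: rule: cite sources / summarize (on two lines)
print(second)  # RULE: CITE SOURCES / SUMMARIZE (on two lines)
```

Both runs see the same context and the same task; only the component behind `complete` changed.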

The mistake I would avoid

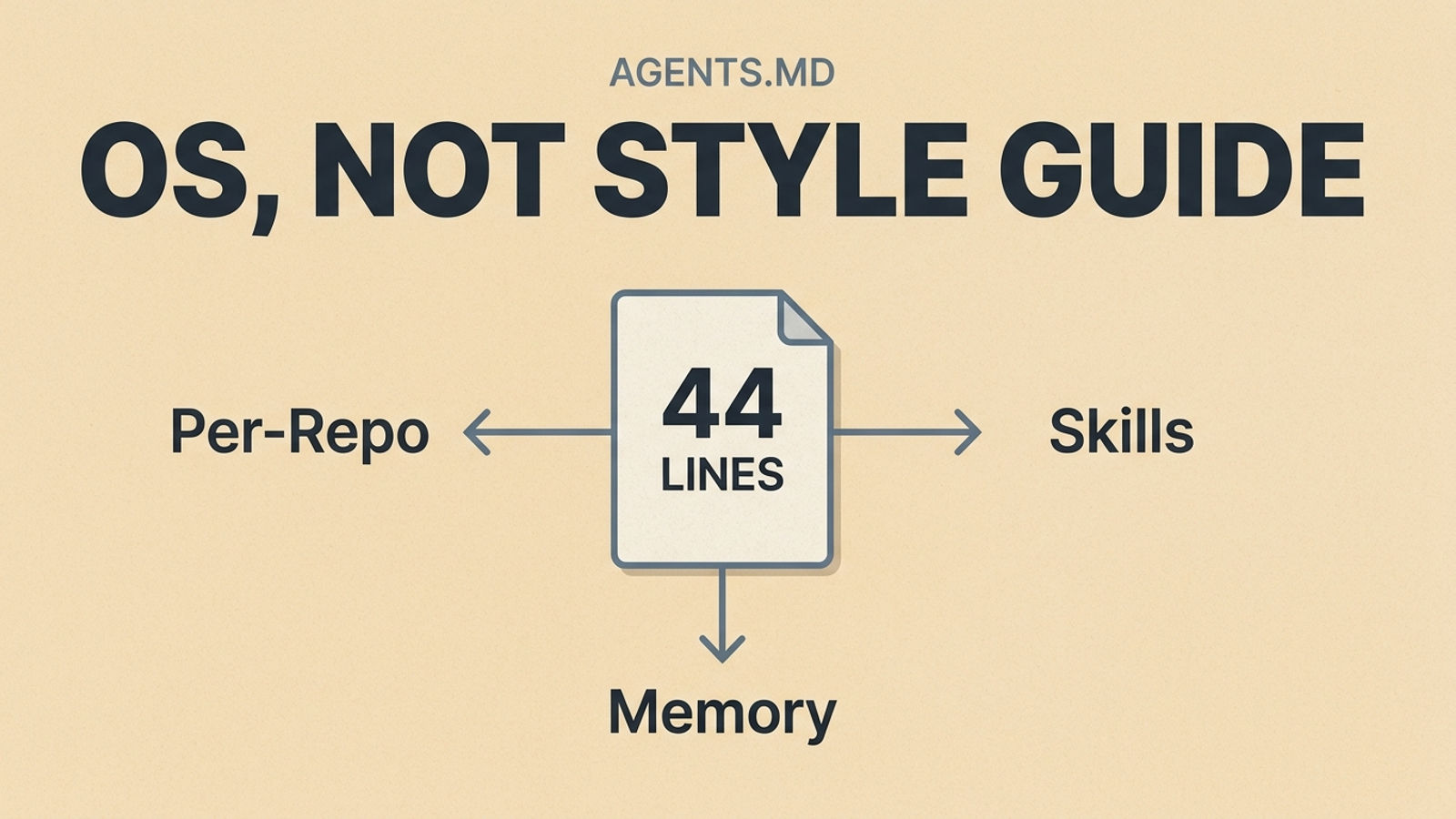

I would not build around "one magical prompt" and call it architecture.

That is the easiest trap in this category. A clever prompt can produce a compelling demo. It can even feel like product progress for a while. But if the memory layer is vague, the routing is ad hoc, the approval model is missing, and the operator cannot inspect what happened, the system is still fragile.

That is why I like keeping the documentation, workflow specs, and operating rules close to the work itself. My notes become the evidence layer. My prompts become procedures. My review steps become control points. If you want the setup path behind that kind of loop, the SystemSculpt Docs are the best place to start before trying to automate everything at once.

My checklist for a real harness layer

Before I would call any stack in Claude Code's open-source ecosystem production-worthy, I would want these five things to be true:

- Memory is explicit, inspectable, and tied to real usage instead of vague personalization.

- Model routing can change without forcing a rewrite of the whole workflow.

- High-impact actions still pass through approvals and review checkpoints.

- Source usage is logged so the system does not keep recycling the same context blindly.

- There is a weekly review loop for drift, failure modes, and wasted complexity.

If those five are not true yet, I do not think I have a serious harness layer.

I think I have an interesting demo.

Why this matters to builders now

People exploring Claude Code's open-source ecosystem are often looking for the tool.

I think the more important question is the layer.

The market is telling us it wants:

- better memory,

- better orchestration,

- better model portability,

- and a more durable working surface around all three.

That changes what I would build next. I would spend less time polishing one-shot prompting and more time designing the harness: the retrieval rules, the approval rules, the routing rules, and the review loops that make the system better after week six, not just impressive on day one.

If you want help turning that kind of harness into a real workflow instead of another AI demo, you can book the workflow build path or start with SystemSculpt Pro. I care much more about making the loop durable than making the first run look magical.