One of the best changes I made this week was deleting a large chunk of my AI workflow.

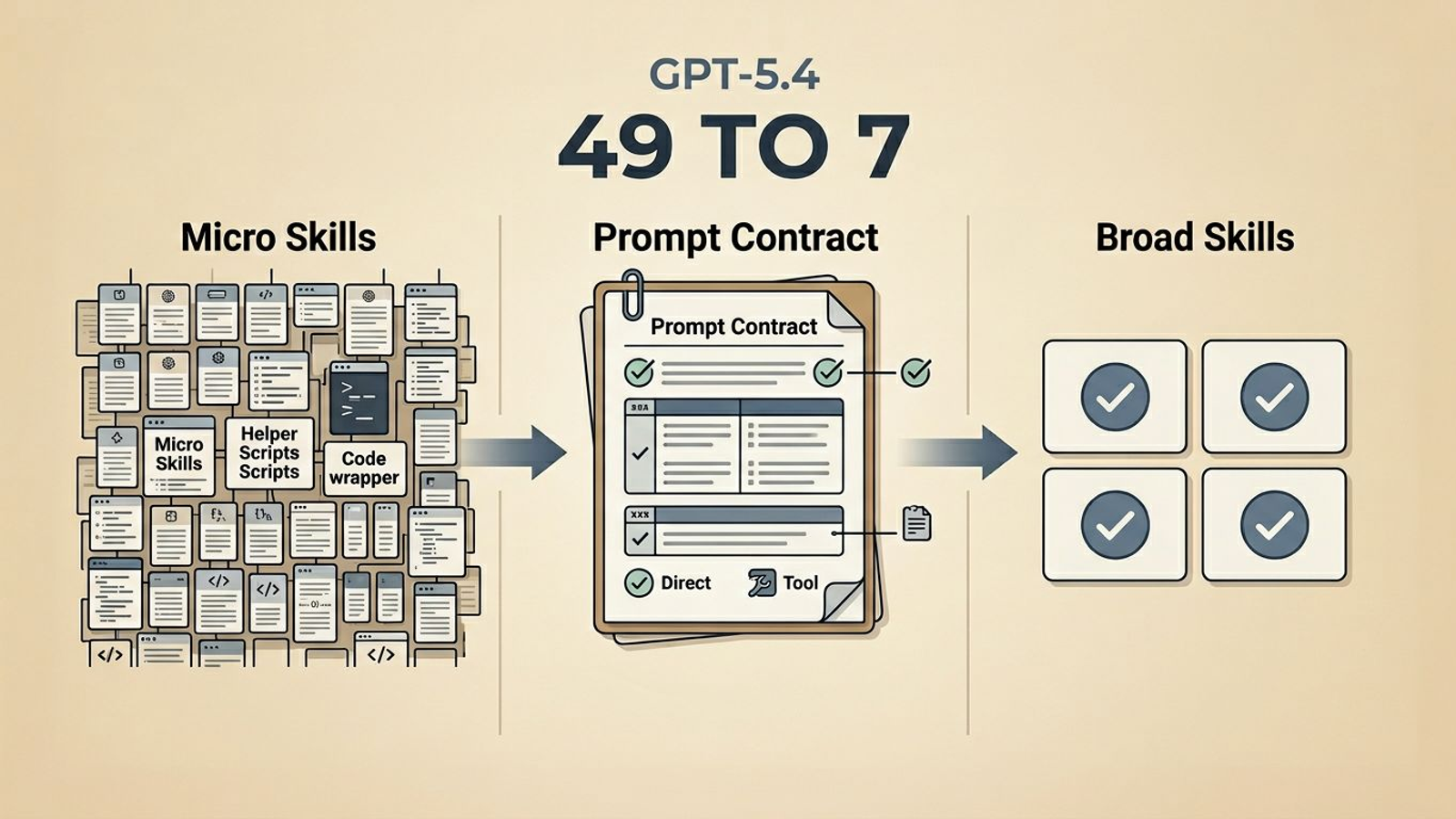

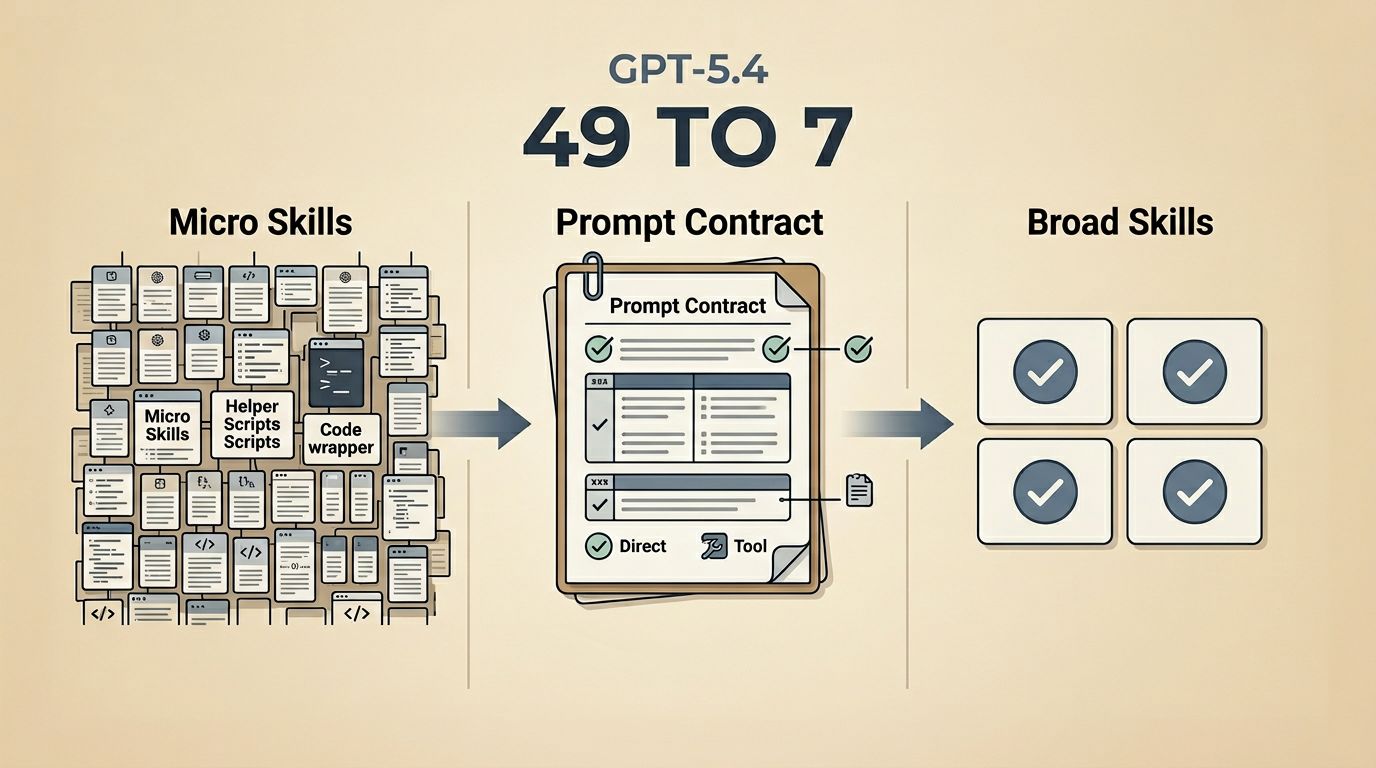

I had 49 skills.

That sounds organized until you actually live inside it. In practice, it meant too many tiny wrappers, too many narrow lanes, and too many little bits of logic built around older model behavior. The system looked disciplined, but a lot of that discipline had turned into drag.

So I cut it down hard.

I converted the whole thing to prompt-only skills, removed the bundled helper junk, merged the overlaps, and kept pruning until the tree went from 49 skills to 18, then from 18 to 6. After that I added back exactly one broad skill I actually wanted to keep using: blog-post.

That left me with 7 total skills.

That number is not the point. The point is what changed when I stopped treating every edge case like it needed its own little box.

For serious work, GPT-5.4 is the model I want to build around right now.

I do not mean that as hype. I mean that when the work actually matters and I care about follow-through, tool discipline, evidence, and long-running reliability, GPT-5.4 is the first model in a while that makes me want to remove structure instead of adding more of it. OpenAI's prompt guidance for GPT-5.4 lines up almost perfectly with what I had been feeling in practice: the future is not less prompting, it is better prompting.

The problem was not too little structure

The problem was the wrong kind of structure.

It is very easy to make an AI workflow look smart for one week. You give it a narrowly named skill, a bundled helper script, a few hidden assumptions, and a path that works beautifully for the exact demo you had in mind. Then real work shows up. The task changes shape. The environment changes. The model gets better. Suddenly the workflow is not helping much. It is just getting in the way.

That was the state I was in. I did not have a structure problem. I had a fragmentation problem.

Too many of my skills were really just tiny cages. They were trying to tell the model exactly how to move instead of telling it what good work looked like. They kept narrowing the lane until the lane itself started becoming the product.

That gets stale fast.

GPT-5.4 changed what useful prompting looks like

What stood out to me in the official GPT-5.4 guidance was not some magical trick. It was how consistently the advice pushed toward clear contracts instead of extra scaffolding.

The same points kept showing up:

- make the output contract explicit

- define what completion actually means

- use tools persistently when correctness depends on them

- keep dependency checks visible

- add a verification loop before you call the work done

- treat reasoning effort like a last-mile tuning knob, not the main solution

That is a very different philosophy from "keep writing helper code until the model behaves."

The latest model guide reinforces the same direction. GPT-5.4 is positioned as a production-grade model for long-running, multi-step work. If that is true, then the question changes. I stop asking, "How many little scripts do I need to keep this on the rails?" and start asking, "What is the smallest contract that still gives me trustworthy work?"

That is a much better question.

So I cleaned up the skill tree like I meant it

The 49 skills were not 49 distinct capabilities. A lot of them were the same capability sliced too thin, usually in one of four ways:

- transport-specific splits

- model-specific splits

- helper-heavy skills with local scripts and reference payloads

- overlapping skills that mostly differed in naming or tool preference

The first move was not to go deeper. It was to simplify the surface.

I cut every active skill down to the actual contract:

- SKILL.md

- agents/openai.yaml

No bundled scripts/. No bundled references/. No bundled assets/. No generator or validator helpers pretending to be durable architecture.
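To make that cutover checkable, here is a minimal sketch of an audit that flags anything in a skill folder beyond SKILL.md and agents/openai.yaml. It assumes one folder per skill under a single root directory. That layout, and the function names `audit_skill` and `audit_tree`, are assumptions for illustration, not something the tooling provides.

```python
from pathlib import Path

# The two files kept per skill in this post; anything else gets flagged.
ALLOWED = {Path("SKILL.md"), Path("agents/openai.yaml")}


def audit_skill(skill_dir: Path) -> list[str]:
    """List files in one skill folder that fall outside the two-file contract."""
    extras = []
    for path in sorted(skill_dir.rglob("*")):
        relative = path.relative_to(skill_dir)
        if path.is_file() and relative not in ALLOWED:
            extras.append(relative.as_posix())
    return extras


def audit_tree(root: Path) -> dict[str, list[str]]:
    """Map each skill folder under root (hypothetical layout) to its extra files."""
    report = {}
    for skill_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        extras = audit_skill(skill_dir)
        if extras:
            report[skill_dir.name] = extras
    return report
```

Calling `audit_tree(Path("skills"))` on a hypothetical `skills` root would return an empty dict once every bundled scripts/, references/, and assets/ file is gone.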

After that hard cutover, I merged aggressively. Broad domains replaced tiny wrappers. Then I pruned again. The tree went from 49 skills to 18, then from 18 to 6. Only then did I add blog-post, the one broad skill I actually wanted in the system.

That left me with 7 total skills. That is not a magic number. It is just a much more honest one.

It reflects a stack that has finally stopped confusing more software with more intelligence.

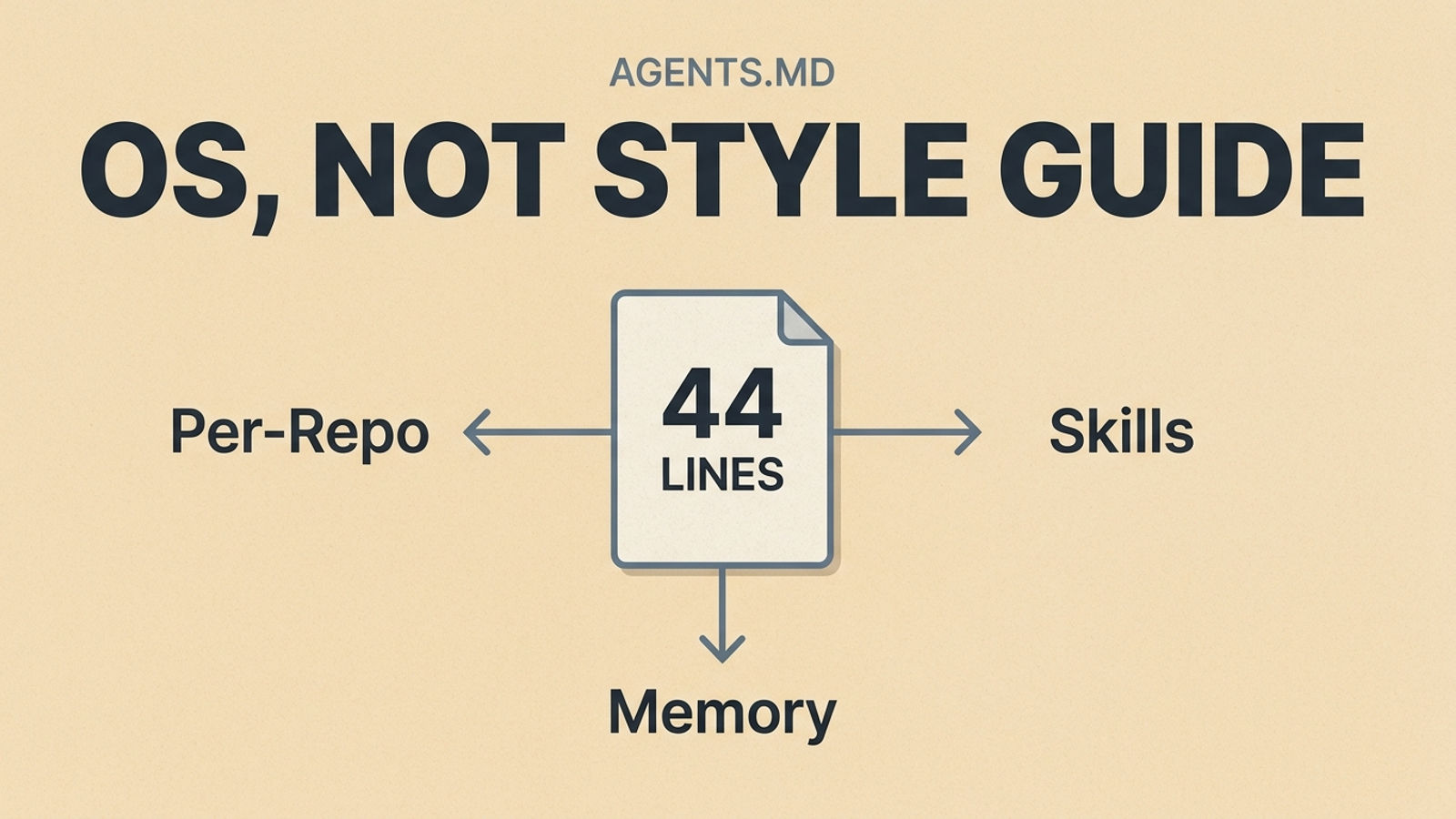

Broad skills are better now

I do not think the lesson is "remove all structure."

That would be lazy and wrong.

The lesson is that structure should move up a level.

A good modern skill should define:

- what the skill is for

- what kind of outcome it should produce

- what sources or tools it should use when correctness matters

- what has to be verified before the result is trusted

- what counts as done

That is the kind of structure GPT-5.4 seems to reward.
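The five items above can be held in a small data structure so a missing section is obvious before the skill is used. This is a minimal sketch, not the SKILL.md format itself: the class name `SkillContract`, its field names, and the example values are all assumptions made for illustration.

```python
from dataclasses import dataclass, field, fields


@dataclass
class SkillContract:
    """Hypothetical container for the five things a broad skill should state."""

    purpose: str = ""
    outcome: str = ""
    sources_and_tools: list[str] = field(default_factory=list)
    verification: list[str] = field(default_factory=list)
    done_criteria: list[str] = field(default_factory=list)

    def missing(self) -> list[str]:
        """Return the names of contract sections that were left empty."""
        return [spec.name for spec in fields(self) if not getattr(self, spec.name)]

    def render(self) -> str:
        """Render the contract as plain prompt text, one section per field."""
        sections = []
        for spec in fields(self):
            value = getattr(self, spec.name)
            title = spec.name.replace("_", " ").capitalize()
            if isinstance(value, list):
                body = "\n".join(f"- {item}" for item in value)
            else:
                body = value
            sections.append(f"{title}:\n{body}")
        return "\n\n".join(sections)


# Illustrative values only; a real skill would state its own contract.
blog_post = SkillContract(
    purpose="Turn real work into a publishable draft",
    outcome="A long-form post in the author's voice",
    sources_and_tools=["web research for current claims"],
    verification=["every external claim has a source"],
)
print(blog_post.missing())  # ['done_criteria']
```

The point of `missing()` is the same as the point of the list: a skill with no written definition of done is not finished, however detailed the rest of it is.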

A bad modern skill keeps trying to micromanage every sub-step because older models needed more handholding.

I think that is where a lot of agent setups are still behind. They are still organized around an old fear: if I do not box the model into a tiny lane, it will drift.

Sometimes that was true. It is just a worse default now.

Once the model is actually good at long-horizon work, the bigger risk becomes the opposite. The lane gets so tiny that the model cannot generalize, cannot recover, and cannot use the intelligence you are paying for.

This is why I do not want a hundred blogging skills either

The same logic applies to writing.

If I do something worth writing about tomorrow, I do not want a different skill for:

- "write a post about JS REPL work"

- "write a post about Neovim config changes"

- "write a post about prompt architecture"

- "write a post about copy quality"

That is the same trap with nicer labels.

What I want is one broad blog-post skill that can look at real work, ask me a few smart questions, research what needs to be grounded, and turn the result into a publishable draft in my voice.

That is structure at the right level.

The skill should know how to:

- start with the real event or workflow

- have a short back-and-forth with me about angle and tone

- do heavier research when the claims are current or externally grounded

- package the result for the SystemSculpt site by default

- still adapt when I want a different long-form output later

That is much more useful than a pile of tiny blog-writing wrappers.

What still needs to stay explicit

This cleanup was not a vote for being vague.

If anything, it made me more convinced that serious prompting needs to be stricter where it actually matters.

The things I want written down now are:

- output contracts

- follow-through defaults

- tool-use expectations

- dependency checks

- citation and grounding rules

- verification loops

- clear completion criteria

That is where the rigor belongs.

I would much rather have one strong prompt contract that says, "Do the research, ground the claims, keep going until the work is complete, and verify before you stop," than five separate helper scripts that lock the model into stale assumptions.
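The "verify before you stop" part of that contract can be sketched as a plain loop. This is an illustration under stated assumptions, not a real model integration: `run_until_verified`, `has_citation`, and `fake_model` are hypothetical names, and `fake_model` is a stand-in for whatever actually produces the draft.

```python
from typing import Callable, List, Optional, Tuple

# A check returns a problem description, or None when the draft passes.
Check = Callable[[str], Optional[str]]


def run_until_verified(
    produce: Callable[[List[str]], str],
    checks: List[Check],
    max_rounds: int = 3,
) -> Tuple[str, List[str]]:
    """Call produce() until every check passes or max_rounds is reached.

    Returns the last draft and any problems that are still open.
    """
    problems: List[str] = []
    draft = ""
    for _ in range(max_rounds):
        draft = produce(problems)  # feed the open problems back in
        problems = [msg for check in checks if (msg := check(draft)) is not None]
        if not problems:
            break
    return draft, problems


def has_citation(draft: str) -> Optional[str]:
    """Toy grounding check: require a source marker in the draft."""
    return None if "[source]" in draft else "no citation found"


def fake_model(open_problems: List[str]) -> str:
    """Stand-in for a real model call: adds a citation once asked to."""
    return "Claim. [source]" if open_problems else "Claim."


draft, remaining = run_until_verified(fake_model, [has_citation])
print(draft, remaining)
```

The loop returns the open problems instead of raising, so the caller decides whether an unverified draft is acceptable. The round limit keeps "keep going until the work is complete" from turning into "keep going forever."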

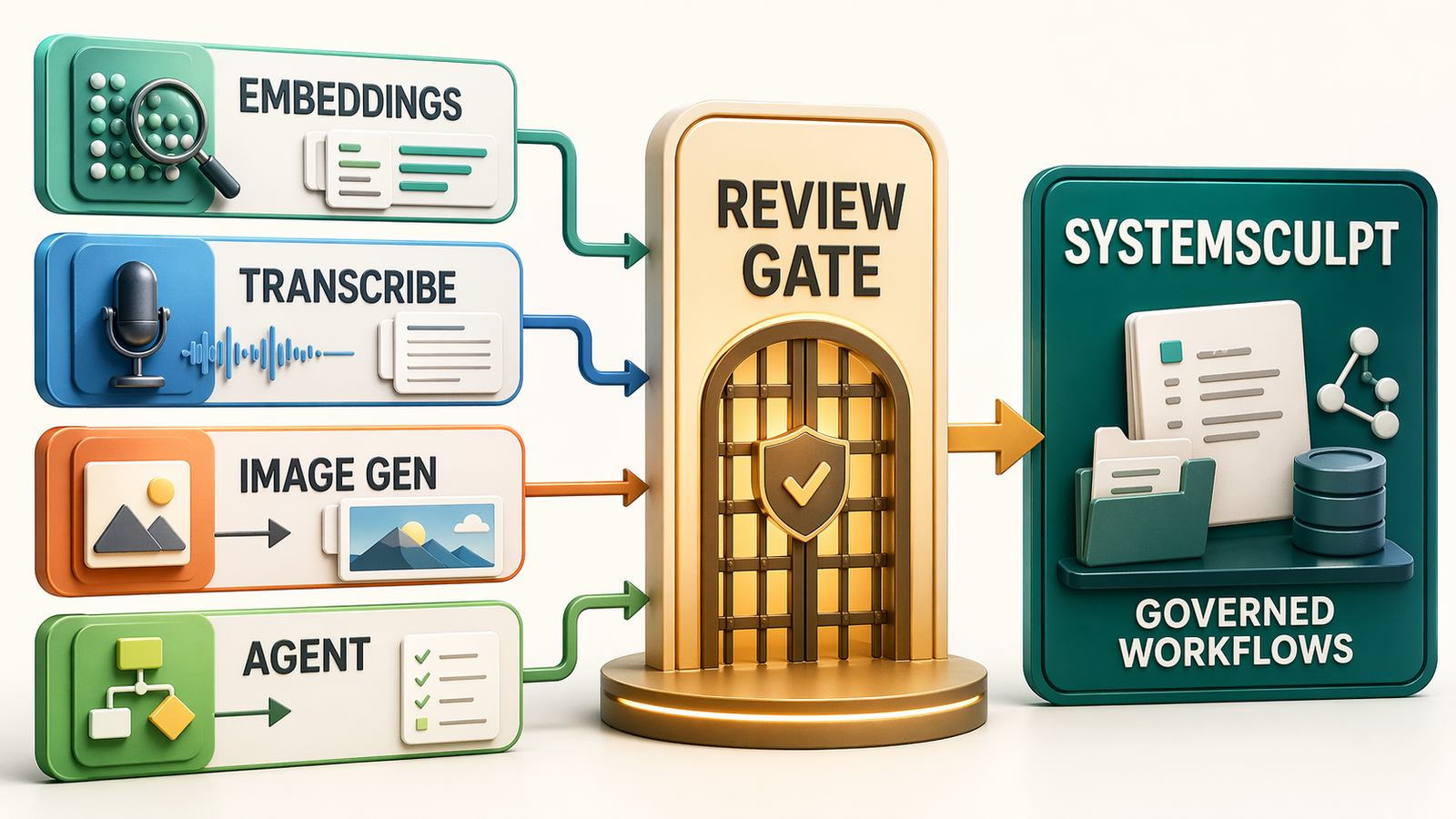

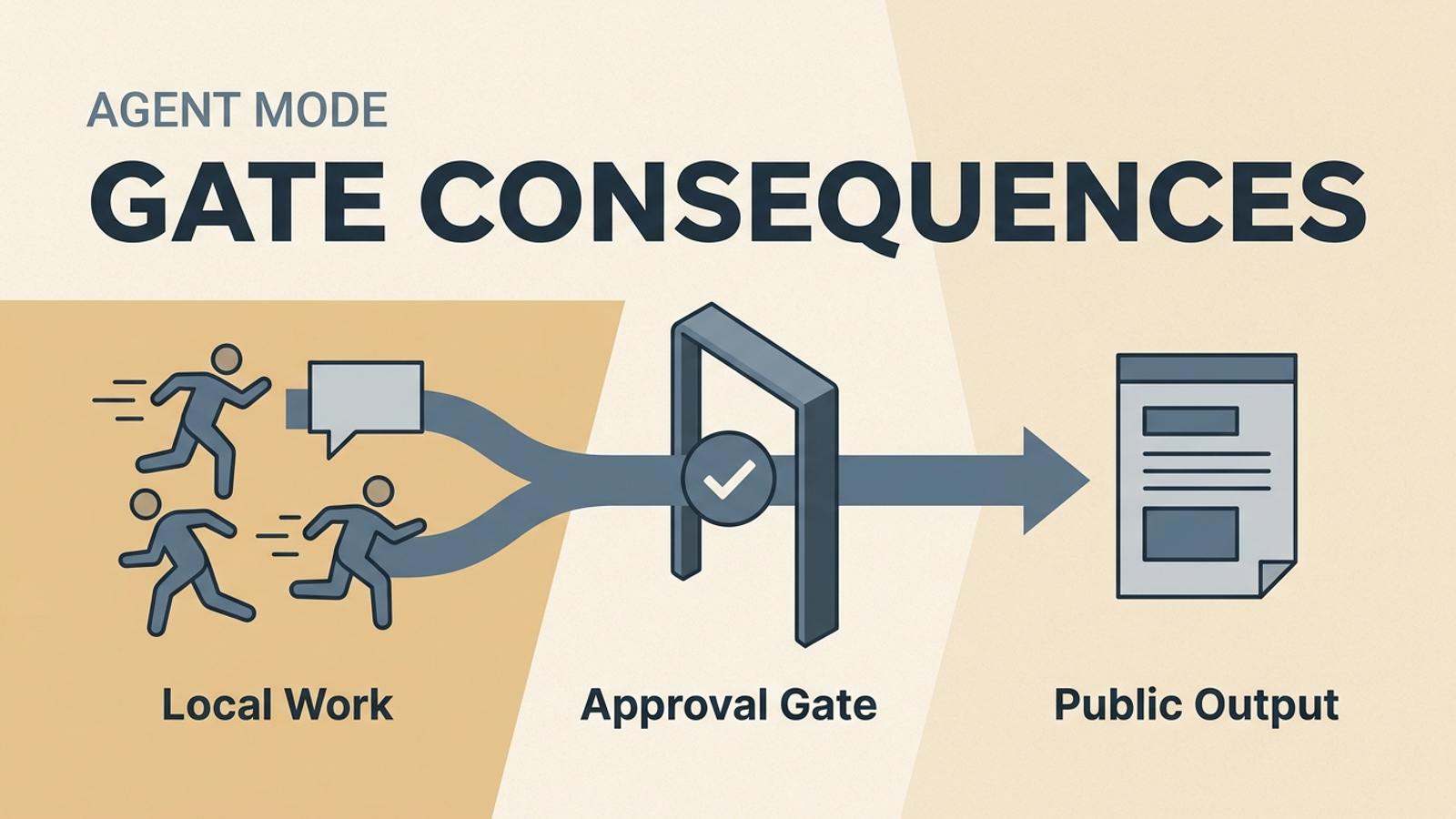

That is also why I still care about explicit review and workflow control in places like the Project Spec Workflow and the Weekly AI Risk Review. Better prompting does not remove the need for control. It puts the control in clearer, more durable places.

The rule I am keeping from here

For serious work, I am building around GPT-5.4-style prompting now.

That means:

- broad skills with strong contracts

- prompt-first behavior instead of helper-script sprawl

- direct native tool use when the model needs to act

- research and citations when the claim is current

- verification before I call the work finished

I expect future frontier models to keep moving in this direction, not away from it. So I do not want my setup optimized around old model weaknesses if that optimization now blocks better behavior.

I want the architecture to age well.

That was the whole point of this cleanup. The goal was not to feel tidy. The goal was to stop wrapping the model in stale assumptions and keep the structure that still compounds.

If you are building AI workflows and they keep getting more complicated without getting more trustworthy, that is usually the sign. You probably do not need another micro-skill. You probably need a better contract.

The next step is deliberately small: choose one repeated workflow, write down what "done" means, and delete one helper or micro-skill that no longer earns its place.

That is the same philosophy behind SystemSculpt Pro, and if you want the self-serve version of that stack without piecing it together manually, start with SystemSculpt Lifetime. If you need a governed implementation path for a real business workflow, that is exactly why I offer the workflow build.

I care a lot less about making the agent look busy than I do about making the workflow stay trustworthy after week six.