The Siri conversation keeps getting framed as a model story.

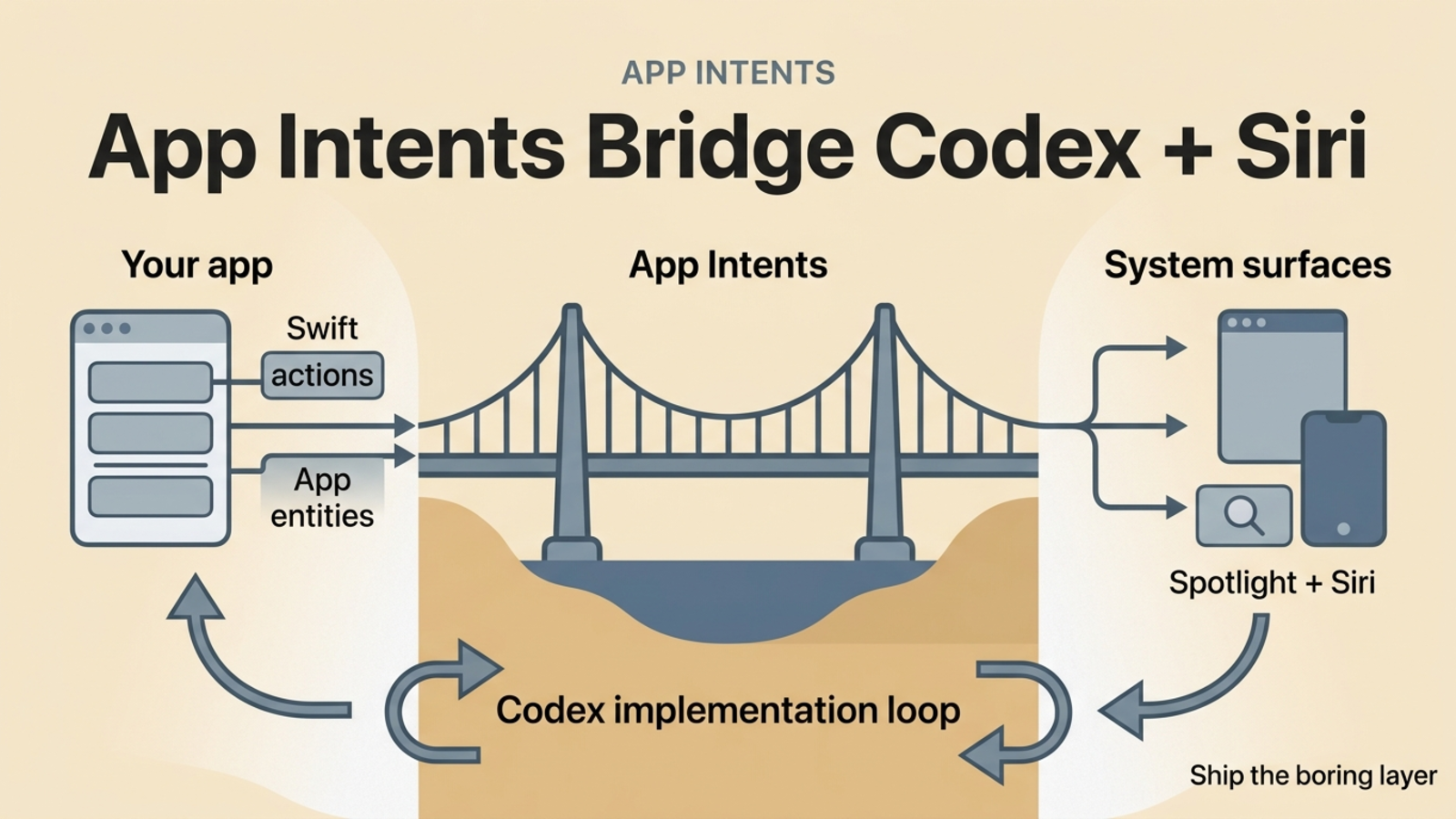

I think it is an app surface story.

Apple gave developers the contract for that surface back at WWDC 2022, when it introduced App Intents. OpenAI made the timing sharper on April 16, 2026, when it published a Codex use case page called "Add iOS app intents" and, on the same day, expanded Codex into a broader desktop agent in its "Codex for (almost) everything" announcement.

That pairing matters.

App Intents is how an iPhone or Mac learns the verbs and nouns inside your app. Codex is the kind of tool that makes shipping those verbs and nouns a much smaller project than it used to be.

OpenAI is now treating App Intents as a first-class Codex use case, not as obscure Apple plumbing.

Apple already solved the interface problem

The original WWDC22 App Intents deep dive is still the cleanest place to start.

Apple's pitch was practical:

- App Intents are written in Swift

- simple intents can live in the main app target

- the system extracts the metadata at build time

- the same actions can appear in Siri, Shortcuts, Spotlight, and other system entry points

That was the quiet upgrade over the older SiriKit custom-intent world. You no longer had to treat system integration like a second product built beside the app. The action definition could live with the app itself.

Over the next three WWDC cycles, Apple kept widening the surface:

- WWDC24 added Spotlight indexing for entities, `Transferable`, `IntentFile`, `FileEntity`, `URLRepresentableEntity`, union values, and framework support improvements.

- WWDC25 positioned App Intents even more directly as the framework that spreads app functionality across the system.

- Apple's current iPadOS 26 What's New guide now describes App Intents as the layer behind Siri, Spotlight, widgets, controls, the Action Button, Control Center, and app-specific results inside visual intelligence.

That is a much bigger claim than "you can add a Shortcut."

Here is the shape Apple is asking developers to build:

If your app exposes useful actions and meaningful entities, the system has something to reason over. If it does not, Siri is stuck guessing at a UI.

That last point matters more now because Apple is being explicit about where this is going. Its Siri and Apple Intelligence docs say App Intents is how you express your app's capabilities and content to Siri and Apple Intelligence. The same page also says Siri's personal context understanding, onscreen awareness, and in-app actions are still in development and tied to a future software update. Apple is not promising magic from thin air; it is asking developers to describe their apps in a system-readable way first.

By 2026, Apple is treating App Intents as system infrastructure. The list of supported surfaces keeps expanding.

Codex changes the economics of shipping that surface

This is where the OpenAI page is more important than it first looks.

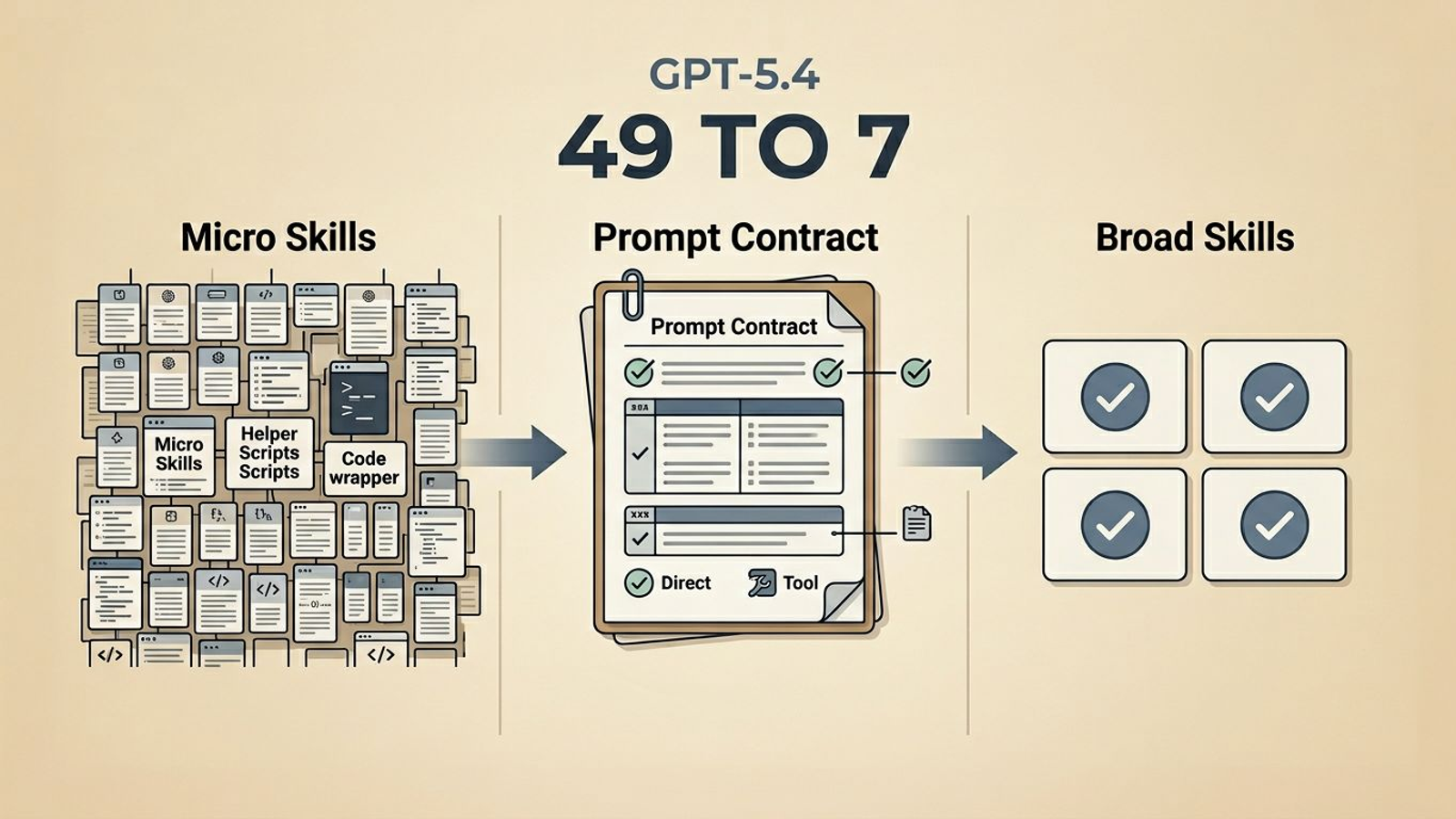

The Codex use case page is not selling a vague "AI builds iOS apps" fantasy. It gives a very specific framing: use Codex to identify the actions and entities your app should expose, wire them into Shortcuts and Spotlight, keep the first pass small, and validate that the app still builds and routes correctly.

That is the right framing because App Intents work usually dies in the same place:

- teams know they should do it

- the app already has good actions and objects

- nobody wants to spend a sprint on the boilerplate, naming, display representations, entity queries, and routing cleanup

That is exactly the sort of work coding agents are good at.

It is structured work. It has a clear contract. It benefits from reading existing Swift types and navigation flow. It needs taste, but it does not need a human typing every line by hand.

OpenAI's April 16, 2026 Codex update makes that fit even tighter. Codex now has computer use on macOS, an in-app browser, more plugins, memory, automations, and image work. My inference is that App Intents becomes a natural target in that environment because the agent can inspect the app, read the model layer, trace navigation, write Swift, and then test whether the result still builds.

That is a much better loop than "remember to add Siri support later."

This is the kind of prompt I would hand the agent

The best first pass is small and concrete.

> Audit this iOS app for App Intents.
>
> Start with three actions that are useful outside the full UI:
>
> 1. one open-app navigation action
> 2. one create or update action
> 3. one find or open action tied to a real entity
>
> Define the smallest entity surface that Shortcuts, Siri, and Spotlight actually need.
>
> Add App Shortcuts where the action should be discoverable.
>
> Keep routing clean inside the existing app architecture.
>
> Build after the first pass and report what system surfaces are now unlocked.

That is enough to get real progress without turning the agent loose on your whole model layer.

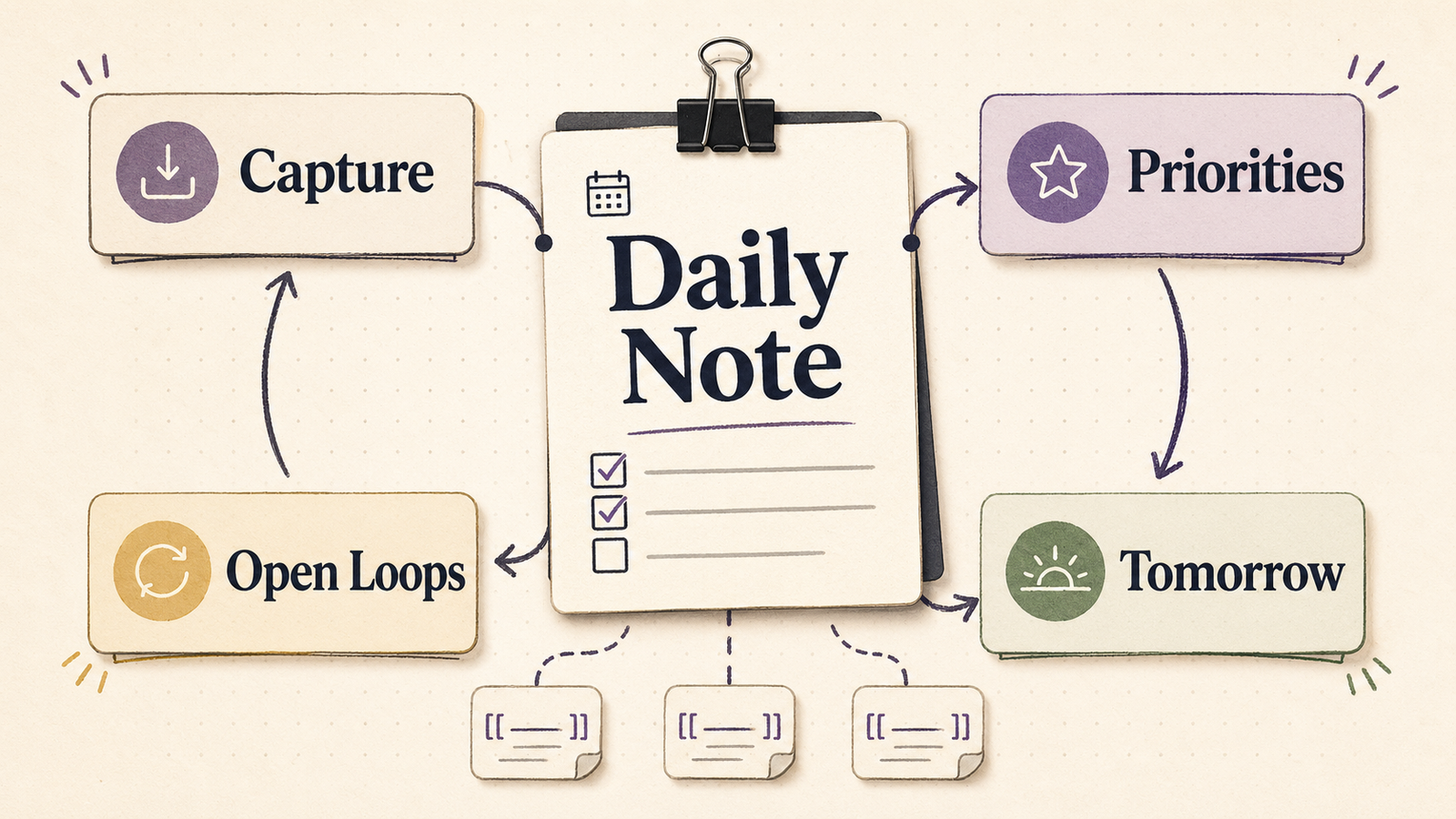

The first release should stay boring

I would not start with a grand assistant architecture.

I would start with three things:

- A foreground intent that opens a high-value screen or workflow.

- A background-safe intent that creates, updates, or toggles something useful.

- A real `AppEntity` plus a query so Spotlight and Shortcuts can find the thing by name.

In code, the first intent is often this simple:

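```swift
import AppIntents

// A foreground intent: running it opens the app on the favorites screen.
struct OpenFavoritesIntent: AppIntent {
    static let title: LocalizedStringResource = "Open Favorites"

    // Bring the app to the foreground before perform() runs.
    static let openAppWhenRun = true

    // Navigation touches UI state, so run on the main actor.
    // `Navigator` is the app's own router, not an App Intents type.
    @MainActor
    func perform() async throws -> some IntentResult {
        Navigator.shared.open(.favorites)
        return .result()
    }
}
```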
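The snippet above leans on `Navigator`, which is not part of App Intents; it stands in for whatever router the app already has. As a minimal sketch, assuming a hypothetical `Destination` enum and a main-actor singleton (both names invented for illustration), that router could be as small as this:

```swift
// Hypothetical destinations; a real app would list its own screens here.
enum Destination: Hashable {
    case favorites
    case note(id: String)
}

// Hypothetical router standing in for the app's existing navigation layer.
@MainActor
final class Navigator {
    // One shared instance so intents and views route through the same state.
    static let shared = Navigator()

    // A real app would make this observable (for example with @Observable)
    // so a NavigationStack can follow it; here it is plain state.
    private(set) var path: [Destination] = []

    private init() {}

    // Replace the whole stack so an intent always lands on a predictable screen.
    func open(_ destination: Destination) {
        path = [destination]
    }
}
```

Keeping the intent's `perform()` to a single routing call means the app, not the intent, decides what "open favorites" means.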

The hard part is almost never the `perform()` body. The hard part is choosing good actions, naming them clearly, and exposing just enough entity structure for the system to do something useful.

That is where App Intents stops being boilerplate and starts being product design.
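For the third item in the list above, a real `AppEntity` plus a query, here is a minimal sketch assuming a note-taking app. `NoteRecord`, `NoteStore`, `NoteEntity`, and `NoteQuery` are hypothetical names, and the in-memory actor stands in for the app's real persistence. It uses `EntityStringQuery` so Shortcuts and Siri can match a note by part of its title.

```swift
import AppIntents
import Foundation

// Plain, Sendable value the hypothetical store hands out.
struct NoteRecord: Sendable {
    let id: UUID
    let title: String
}

// Hypothetical in-memory store standing in for the app's real model layer.
actor NoteStore {
    static let shared = NoteStore()
    private var notes: [UUID: String] = [:]

    func add(title: String) -> UUID {
        let id = UUID()
        notes[id] = title
        return id
    }

    func records(for ids: [UUID]) -> [NoteRecord] {
        ids.compactMap { id in notes[id].map { NoteRecord(id: id, title: $0) } }
    }

    func records(matching text: String) -> [NoteRecord] {
        notes
            .filter { $0.value.localizedCaseInsensitiveContains(text) }
            .map { NoteRecord(id: $0.key, title: $0.value) }
    }

    func allRecords() -> [NoteRecord] {
        notes.map { NoteRecord(id: $0.key, title: $0.value) }
    }
}

// The "noun" Shortcuts, Siri, and Spotlight can refer to by name.
struct NoteEntity: AppEntity {
    static let typeDisplayRepresentation: TypeDisplayRepresentation = "Note"
    static let defaultQuery = NoteQuery()

    let id: UUID

    // @Property exposes the title to Shortcuts as a readable attribute.
    @Property(title: "Title")
    var title: String

    init(record: NoteRecord) {
        self.id = record.id
        self.title = record.title
    }

    // What the system shows when it lists or confirms this note.
    var displayRepresentation: DisplayRepresentation {
        DisplayRepresentation(title: "\(title)")
    }
}

// EntityStringQuery adds text matching on top of lookup by identifier.
struct NoteQuery: EntityStringQuery {
    // Resolves notes the system already holds identifiers for.
    func entities(for identifiers: [UUID]) async throws -> [NoteEntity] {
        await NoteStore.shared.records(for: identifiers).map(NoteEntity.init(record:))
    }

    // Lets a person find a note by typing or saying part of its title.
    func entities(matching string: String) async throws -> [NoteEntity] {
        await NoteStore.shared.records(matching: string).map(NoteEntity.init(record:))
    }

    // Offered as choices when someone picks a note in Shortcuts.
    func suggestedEntities() async throws -> [NoteEntity] {
        await NoteStore.shared.allRecords().map(NoteEntity.init(record:))
    }
}
```

The display representation and the `@Property` title are the product-design part: they decide what the system shows and which attributes Shortcuts can read.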

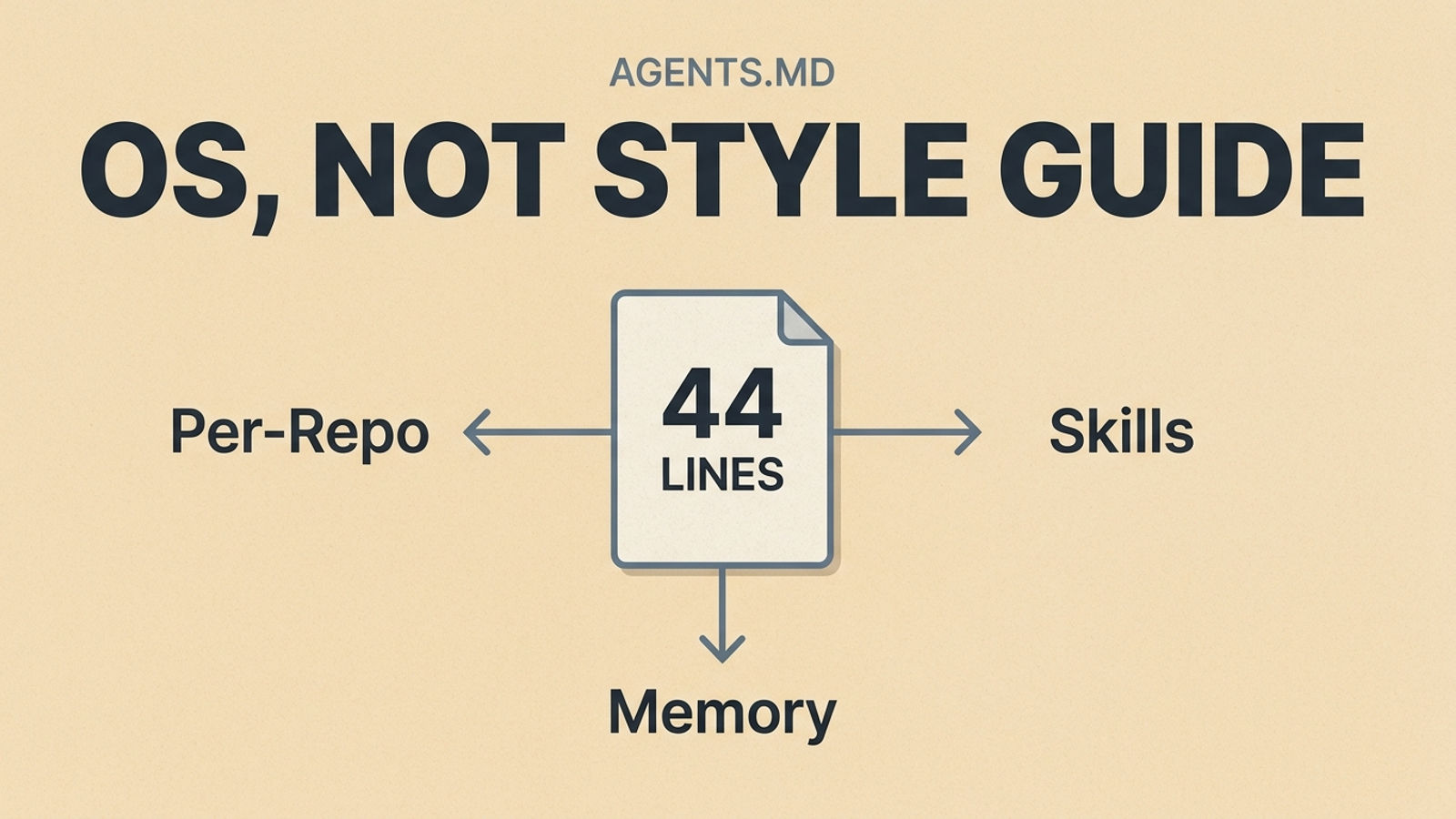

A first-week checklist

If I were starting this on a live app next week, I would keep the first pass this tight:

- list the three verbs people most often want outside the full UI

- expose one real entity with a clear display representation and query path

- make the App Shortcut phrases sound like something a person would actually say

- run the result from Shortcuts, Spotlight, and Siri before adding more surface

- only after that, expand into deeper indexing, URL representations, or assistant schemas
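App Shortcuts, and the phrases people say to trigger them, are declared in an `AppShortcutsProvider`. A minimal sketch, assuming iOS 17 or later (for `shortTitle` and `systemImageName`); it re-declares a compact `OpenFavoritesIntent` without the router call so the snippet compiles on its own, and the phrases and SF Symbol name are illustrative:

```swift
import AppIntents

// Compact copy of the foreground intent; routing is left as a comment here.
struct OpenFavoritesIntent: AppIntent {
    static let title: LocalizedStringResource = "Open Favorites"
    static let openAppWhenRun = true

    @MainActor
    func perform() async throws -> some IntentResult {
        // Call the app's own router here.
        return .result()
    }
}

// Registers the intent as an App Shortcut so it is available in Siri,
// Spotlight, and the Shortcuts app without any setup by the user.
struct FavoritesShortcuts: AppShortcutsProvider {
    static var appShortcuts: [AppShortcut] {
        AppShortcut(
            intent: OpenFavoritesIntent(),
            phrases: [
                // Every phrase must include the app name token.
                "Open my favorites in \(.applicationName)",
                "Show my \(.applicationName) favorites"
            ],
            shortTitle: "Favorites",
            systemImageName: "star"
        )
    }
}
```

Each phrase must contain the `\(.applicationName)` token; reading the phrases aloud is a quick check that they sound like something a person would say.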

Why structured verbs and nouns win

There is a deeper reason this matters.

App Intents is not just an integration API. It is a schema for your app's capabilities.

Apple's WWDC25 Shortcuts and Spotlight session makes this plain: Spotlight on Mac can now run actions directly, parameter summaries have to be good enough to run from search, and the new "Use Model" action can reason over your app entities. That only works if you expose the right properties in the first place.
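Parameter summaries are declared on the intent itself. As a sketch of the background-safe item from the first-release list, here is a hypothetical `CreateNoteIntent` with one parameter and a summary that reads as a sentence; `NoteInbox` is an invented stand-in for the app's storage. It leaves `openAppWhenRun` at its default of `false`, so it can finish without bringing the app forward.

```swift
import AppIntents

// Hypothetical storage actor; a real app would call its existing model layer.
actor NoteInbox {
    static let shared = NoteInbox()
    private(set) var titles: [String] = []

    func add(_ title: String) {
        titles.append(title)
    }
}

// Background-safe: openAppWhenRun keeps its default (false), so this can run
// from Siri, Shortcuts, or Spotlight without foregrounding the app.
struct CreateNoteIntent: AppIntent {
    static let title: LocalizedStringResource = "Create Note"

    @Parameter(title: "Title")
    var noteTitle: String

    // Shown as a fill-in sentence wherever the action is configured or run.
    static var parameterSummary: some ParameterSummary {
        Summary("Create a note titled \(\.$noteTitle)")
    }

    func perform() async throws -> some IntentResult & ProvidesDialog {
        await NoteInbox.shared.add(noteTitle)
        return .result(dialog: "Created \(noteTitle).")
    }
}
```

If the summary does not read cleanly with the parameter filled in, it probably will not read cleanly as a search result either.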

So the future assistant stack is not built from screen scraping first. It is built from structured app semantics:

- verbs the system can invoke

- nouns the system can identify

- properties the system can search

- links the system can route back into the app

That is why App Intents is such an important surface right now. It gives the system something cleaner than taps and screenshots to work with.

My take

If I were shipping an iOS app in April 2026, I would treat App Intents as one of the shortest paths to making the app feel native to the next wave of Siri, Spotlight, and assistant-driven workflows.

Apple has spent four years widening the surface. OpenAI is now explicitly telling Codex users to go implement it. Those two signals line up cleanly.

The apps that benefit most from better assistants will not be the ones with the fanciest demo video. They will be the ones whose actions and entities are already described well enough for the system to call them by name.

That work used to feel annoying enough to postpone.

Now it looks cheap enough to ship.

If you want the broader prompt-contract angle behind how I think about coding agents, "Why I Deleted Most of My Skills for GPT-5.4" is the companion piece. If you want help turning that kind of assistant-facing surface into a real workflow, the workflow build is where I do that work.